Current virtual machine applications, like VirtualBox and VMware, can virtualize the CPU's hardware virtualization extensions (VT-x or AMD-V), which means we can create nested virtualization. In layman's terms, we can create a VM within a VM within a VM. The question is, with an i7 and 32GB of RAM, how deep can we go?


Caution: Do not try this at home. It's not dangerous, or anything. Just a waste of time.

To satisfy your scientific curiosity, we have documented all our attempts, including the failures. So, if you were dreaming of nested virtualization, then you have weird dreams; maybe you should talk to a professional. But you will get the answers to what's possible.

The test rig

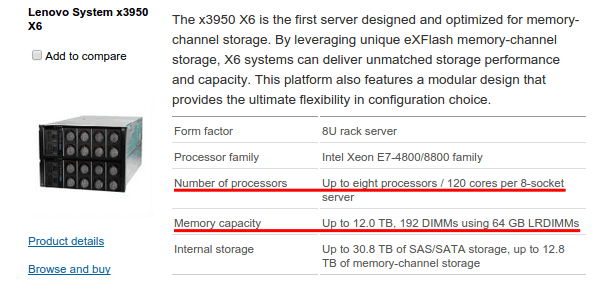

To conduct this experiment in a proper way, we should have used a Multi-CPU server.

120 physical cores and 12.0 TB of RAM would have helped a lot. Like, immensely.

Unfortunately, we forgot the $100,000 that it costs on our other pair of pants. Don't you just hate when this happens?

So, we had to make do with a simple, consumer-grade PC. It has an i7 4790K, running at the stock clock speed of 4GHz. It also has 32GB of RAM, but our guess is we will run out of CPU power way before we run out of RAM.

Still, eight threads is nothing to look down on. It should give us at least a two-level nested virtualization, and maybe more if we're lucky.

Our main OS, and the starting point of our nested virtualization experiment, is Ubuntu Linux 14.10 x64.

Nested virtualization with VirtualBox

VirtualBox is a great piece of software. It's free, most of it is open source, it's easy to use, and it does the job well. And we already saw how to install VirtualBox in Linux Mint and Ubuntu.

The first VirtualBox VM

Since we started with Ubuntu Linux, it made sense to install Ubuntu on all the nested VMs. This way, the hardware requirements would be evenly split up.

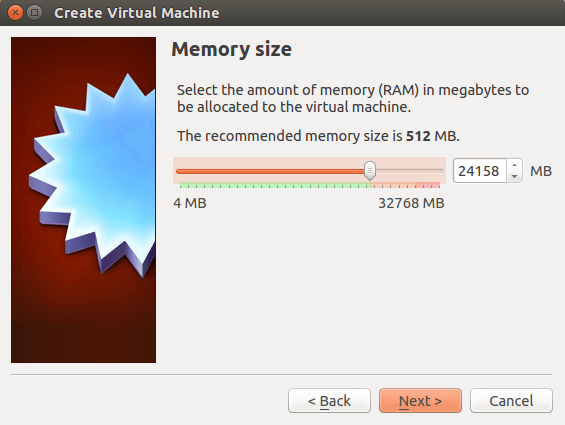

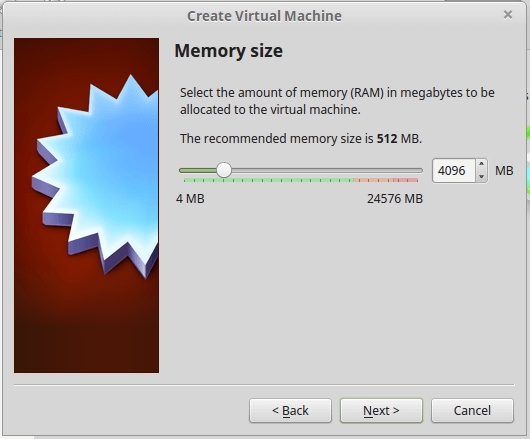

The first VM should have lots of RAM. So, we turned the slider all the way to the end of the green zone, which is the "safe zone" according to VirtualBox.

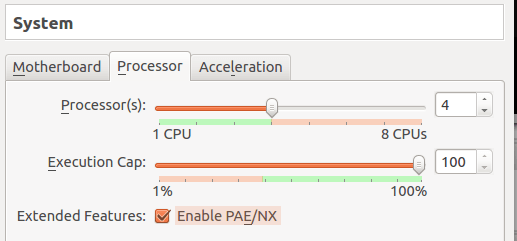

We also gave it four CPU cores, which again is the healthy amount according to VirtualBox.

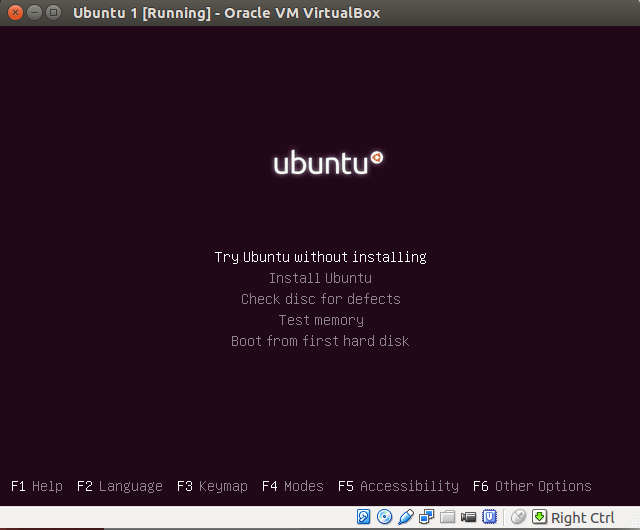

Selecting an Ubuntu 14.10 ISO as a virtual disk, we fired up our first VM. So far, so good.

And then...

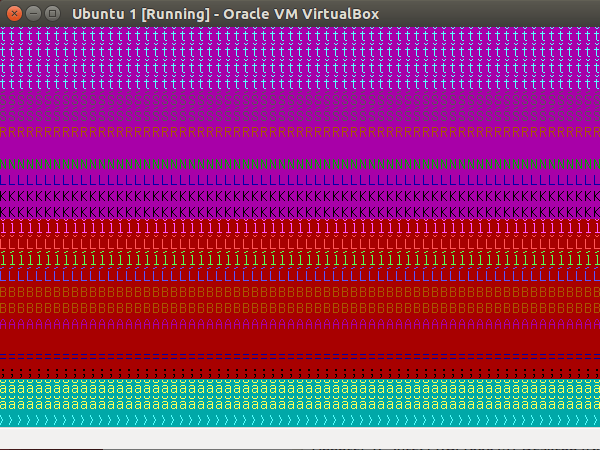

No matter what settings we tried, we couldn't even get the live Ubuntu environment to load. The best we managed was to get a cryptic message.

So, it was time for plan B.

Plan B

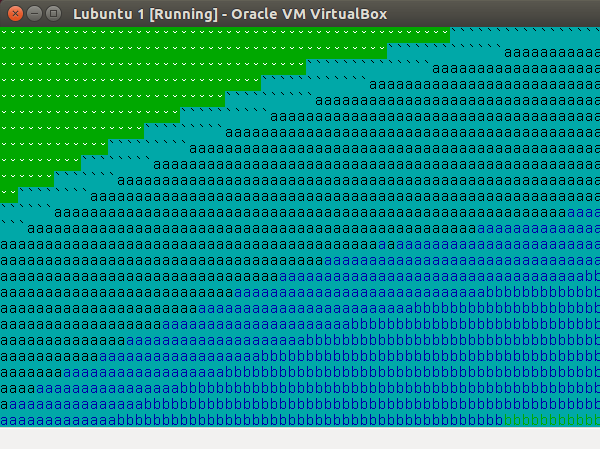

Instead of Ubuntu, we used a Lubuntu 14.10 ISO.

Since Lubuntu is a lightweight Linux Distribution, this would only help our nested virtualization experiment.

And so...

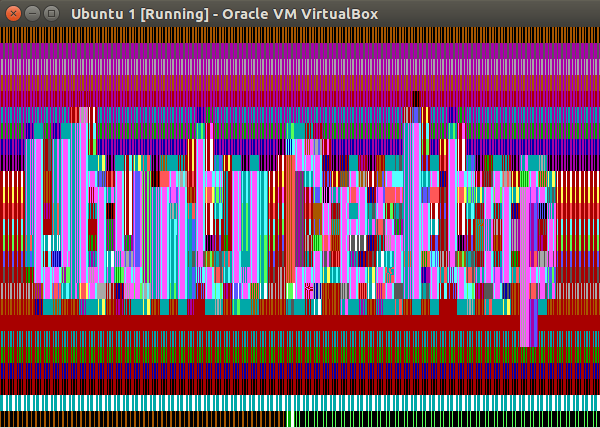

We are starting to suspect that the 14.10 version of Ubuntu just doesn't like VirtualBox. We guess that if we had kept at it, we would have managed to install at least the first VM.

However, the nested virtualization experiment didn't call for a particular distribution.

Plan C

We could probably have tried Windows, since creating a Windows virtual machine in VirtualBox is a matter of minutes.

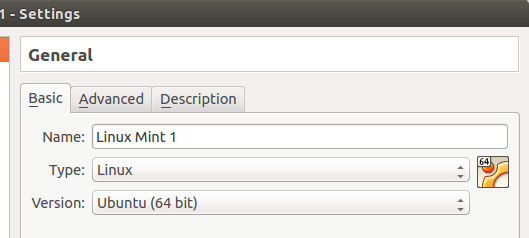

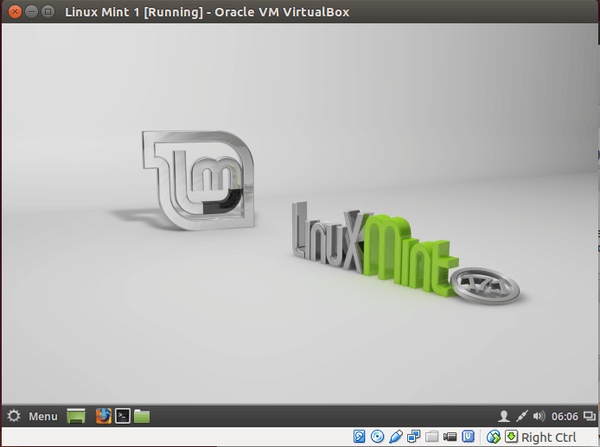

Instead, we gave Linux Mint 17.1 a try, which is based on Ubuntu 14.04 LTS.

Lo and behold, we're live. 14.04 apparently hit the sweet spot with VirtualBox.

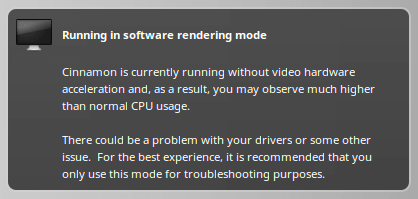

It wouldn't recognize video hardware acceleration, but that's a small price to pay.

The first nested VirtualBox VM

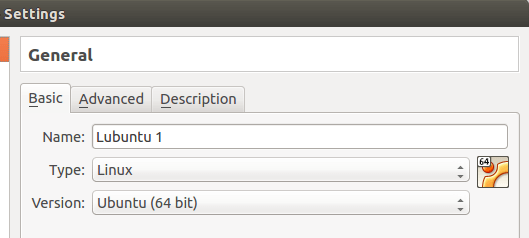

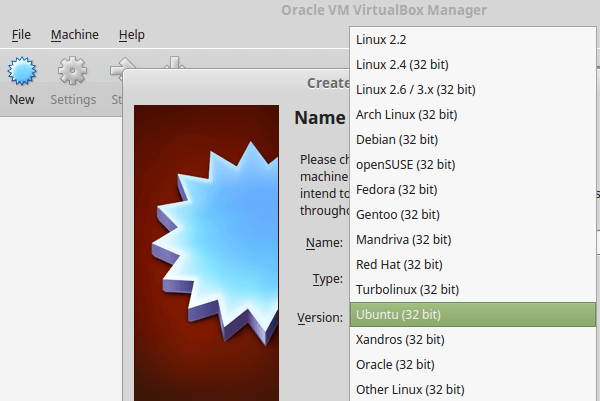

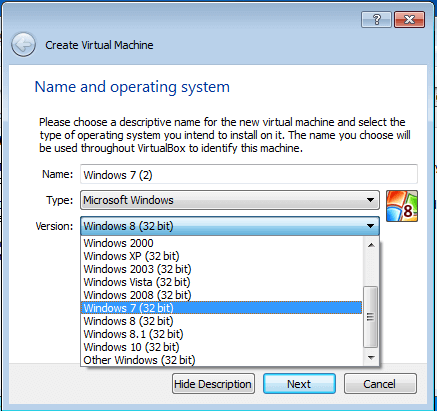

It wasn't long before we ran into trouble. For the first nested virtualization VM, we were only allowed to create a 32-bit system.

That meant that we could only allocate 4GB of RAM to this VM.
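The 4GB figure follows from the width of a 32-bit address. As a rough sketch of the arithmetic, assuming a flat 32-bit address space with no extensions such as PAE (an assumption for illustration, not something the VirtualBox dialog states):

```python
# A 32-bit address can name 2**32 distinct bytes.
ADDRESS_BITS = 32

addressable_bytes = 2 ** ADDRESS_BITS
addressable_gib = addressable_bytes / 2 ** 30  # 1 GiB = 2**30 bytes

print(f"{ADDRESS_BITS}-bit addresses cover {addressable_gib:.0f} GiB")
```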

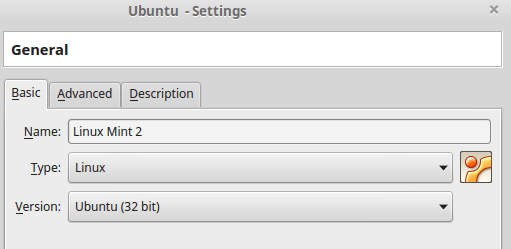

Not to be deterred, we created Linux Mint 2.

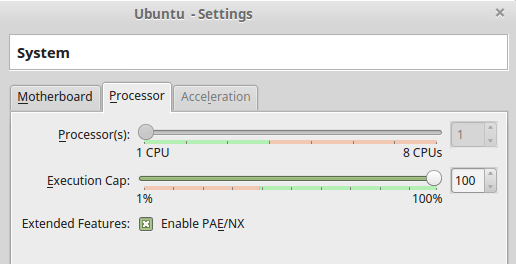

We could also only allocate a single core, even though the VM had four to spare.

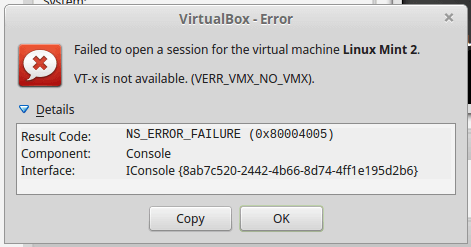

Things weren't going well for our nested virtualization experiment. And, sure enough...

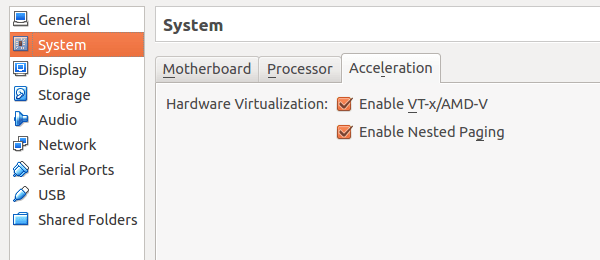

Even though we double checked that we had Hardware Virtualization activated...

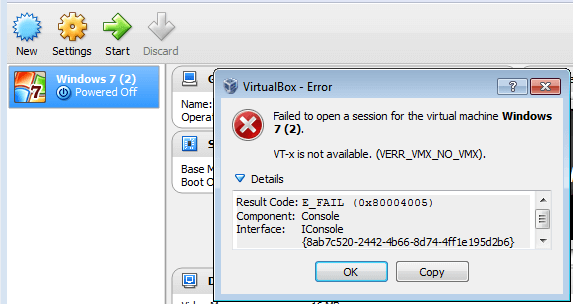

...it wouldn't work on the first nested VM.
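On a Linux guest, one way to see whether the hypervisor really passes hardware virtualization through is to look for the `vmx` (Intel VT-x) or `svm` (AMD-V) CPU flag in `/proc/cpuinfo`. The following is a minimal sketch of that check, not something that was run during this experiment; the function name `virtualization_flags` is made up for the example.

```python
from pathlib import Path


def virtualization_flags(cpuinfo_text):
    """Return the subset of {'vmx', 'svm'} found on any 'flags' line."""
    found = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            found |= {"vmx", "svm"} & set(value.split())
    return found


if __name__ == "__main__":
    path = Path("/proc/cpuinfo")  # Linux only; absent on other systems
    text = path.read_text() if path.exists() else ""
    flags = virtualization_flags(text)
    if flags:
        print("Hardware virtualization exposed:", ", ".join(sorted(flags)))
    else:
        print("No vmx/svm flag visible, so a nested VM here gets no hardware virtualization.")
```

If the flag is missing inside the first VM, any hypervisor running there has to fall back to software virtualization, which would be consistent with the 32-bit-only limit described above.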

The first VirtualBox VM (redux)

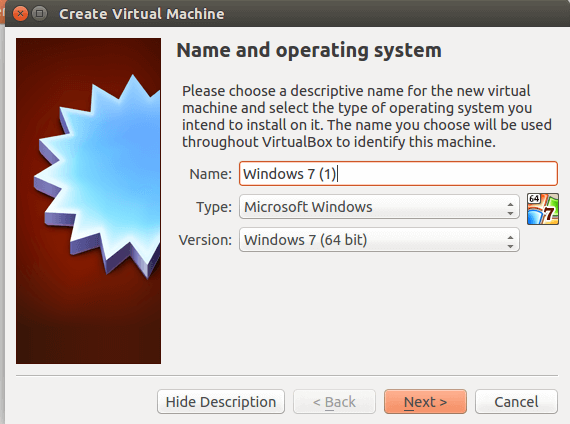

So, back to square zero. We thought that maybe it was Linux Mint's fault, so we scrapped the previous VM, and created a Windows 7 one. Having the 30-day trial ISOs from Digital River sure did help.

We didn't have any problems with the first VM.

However, we still couldn't install a 64-bit VM inside the first...

...and VT-x virtualization still didn't work.

All in all, nested virtualization with VirtualBox was nothing short of a failure. And a miserable one, at that.

So, did this mean that the nested virtualization experiment was over? Hardly.

Nested virtualization with VMware Workstation

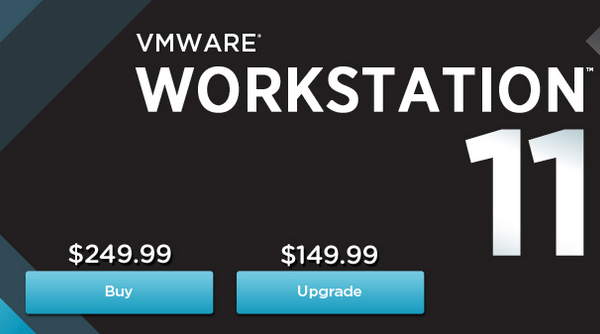

VMware Workstation is a professional solution for creating virtual machines, and it costs $250.

Here at PCsteps we don't think that "paid" necessarily equals "better" when talking about software. But, after we struck out with VirtualBox, VMware was worth a try.

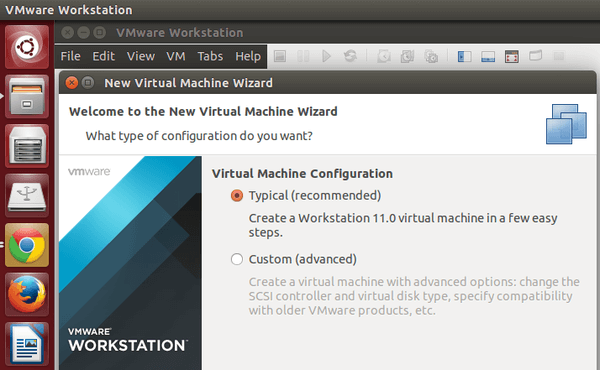

The first VMware Workstation VM

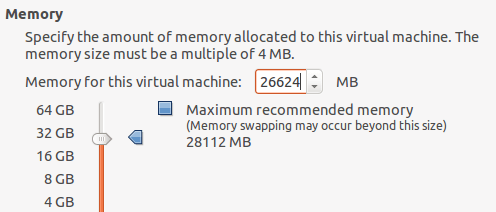

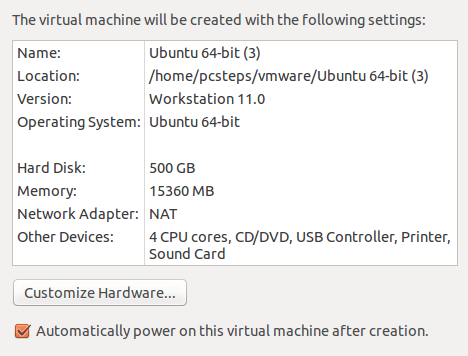

For the first VM, we allocated 26GB of RAM.

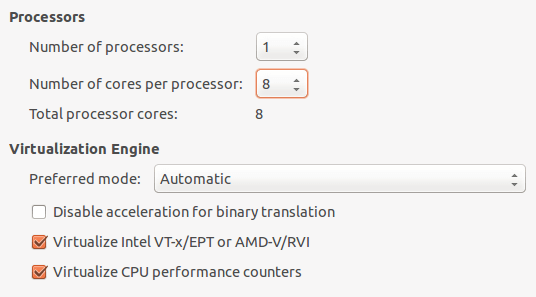

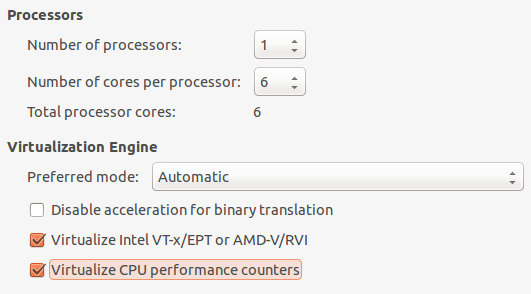

We also gave it the full eight cores and made sure the option to virtualize VT-x was enabled.

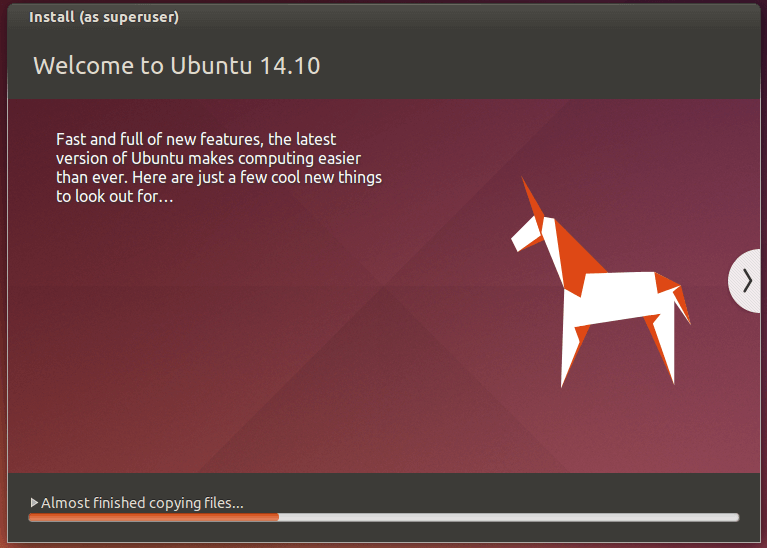

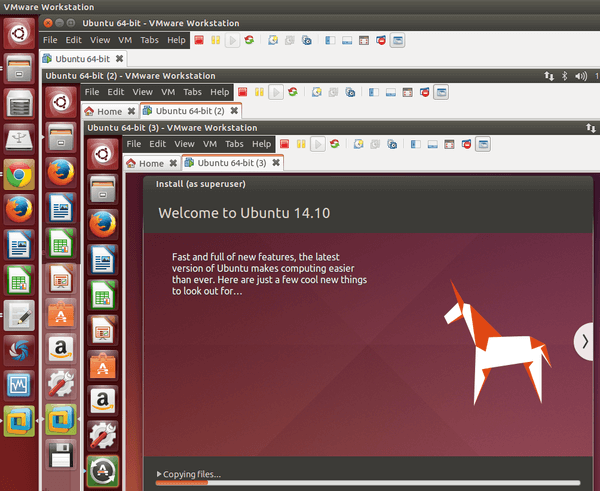

The Ubuntu 14.10 installation went off without a hitch. Also, the "Easy install" feature of VMware came in handy; it went through the installation completely unattended.

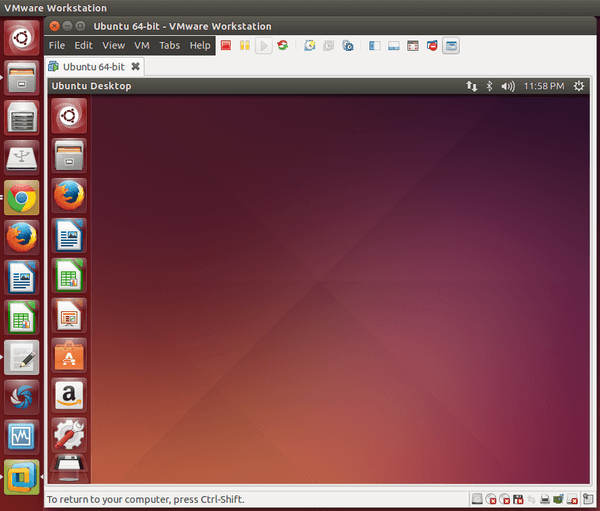

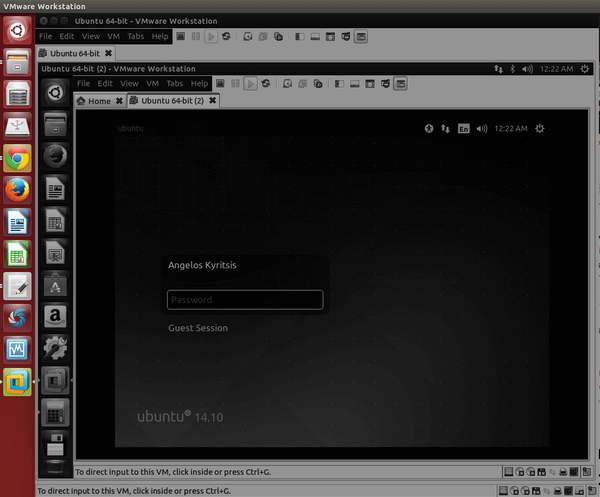

Before long, we had our first virtual machine.

The first nested VMware Workstation VM

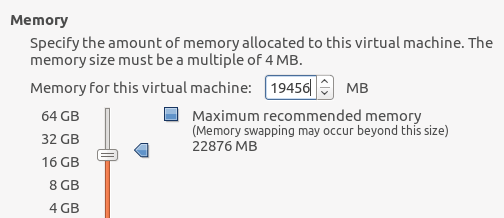

For the first nested VM, we allocated around 19GB of RAM.

This time, we gave it six cores and virtualized VT-x, of course.

That was as far as we went with VirtualBox. Fearing that it wouldn't work, and this whole article would be a bust, we started up the nested VM.

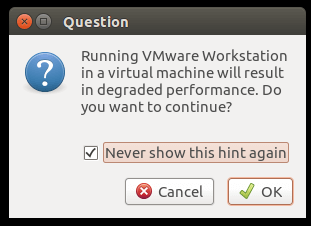

We got a warning that a VM within a VM will result in degraded performance. A much better warning than VirtualBox's error message.

Well, what do you know. It works.

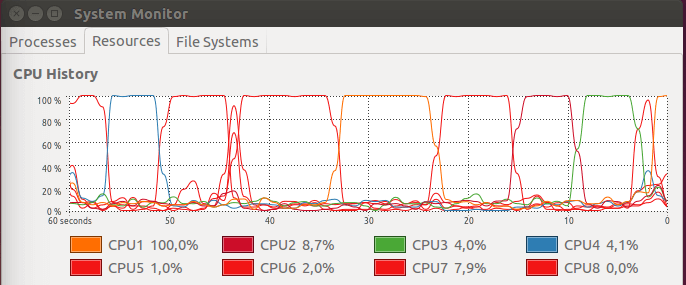

The CPU usage is peaking during the installation.

We even had VMware Workstation black out at some point.

But then it got unstuck, and our first nested VM was live.

Officially, the nested virtualization experiment was a successful one. But now it's time to ask the serious question. How deep can we go?

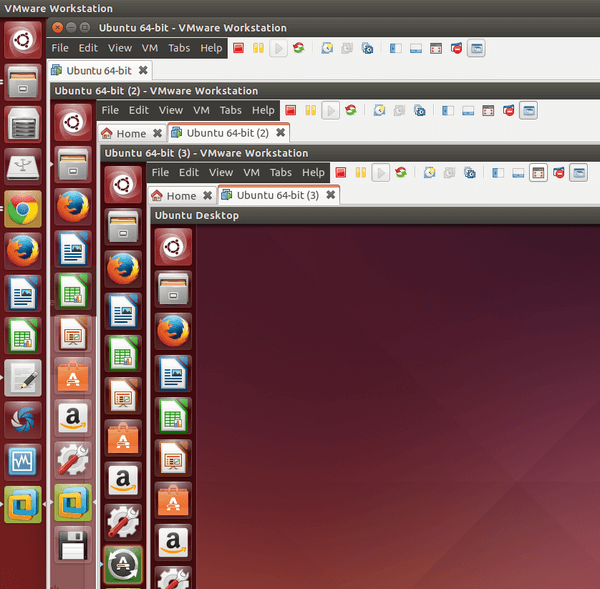

The second nested VMware Workstation VM

For the second nested VM, we allocated 15GB of RAM and four CPU cores out of the six of the first nested VM.

Not only did it work, but it went more smoothly than the previous VM. We didn't have any black-outs.

Before long, the second nested VM installation was live.

Of course, the deeper we went, the slower everything became. But it worked, no doubt about that.

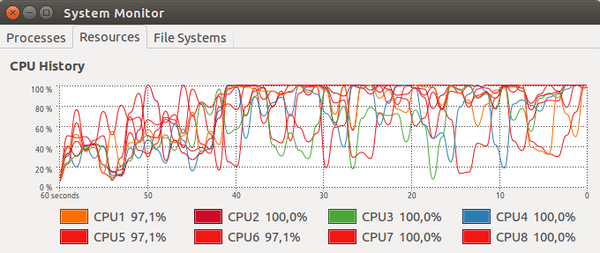

The thing is, even the simplest task, such as copying a file, would make the CPU light up like a Christmas tree.

It's abundantly clear by now that RAM will have nothing to do with the limit of the VMs. It's all a matter of CPU cores and the quality of the VT-x virtualization.
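To put the allocations so far side by side, here is a small sketch that tabulates how much each level held back from its child. The RAM and core figures are the ones quoted in the text (the 19GB value is approximate); the helper name `headroom` is made up for the example, and "held back" simply means "not assigned to the child VM", not a measurement of real usage.

```python
# (level name, RAM in GB, CPU cores/threads) as quoted in the article.
levels = [
    ("Host (Ubuntu 14.10)", 32, 8),
    ("First VM", 26, 8),
    ("First nested VM", 19, 6),   # "around 19GB" in the text
    ("Second nested VM", 15, 4),
]


def headroom(levels):
    """For each parent/child pair, return (parent name, GB not passed down, cores not passed down)."""
    return [
        (parent[0], parent[1] - child[1], parent[2] - child[2])
        for parent, child in zip(levels, levels[1:])
    ]


for name, ram_left, cores_left in headroom(levels):
    print(f"{name}: {ram_left} GB RAM and {cores_left} cores not passed down")
```

Even at the deepest level that worked, 15GB of RAM was still on the table, which fits the observation that memory was never the bottleneck.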

The third nested VMware Workstation VM

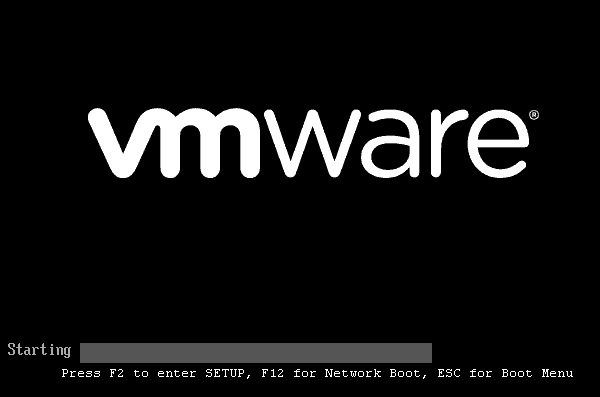

Things didn't look up for the third nested VM. VMware's logo, which usually flies by too fast to enter the BIOS with F2, now took whole minutes to load.

The CPU activity was also erratic. A single core would be at 100%, and then change places with another core.

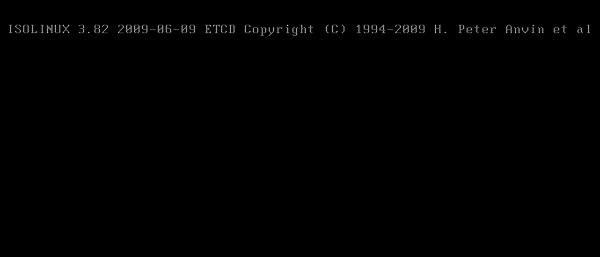

In the end, the VM managed to load beyond the POST screen, but it got stuck at the ISOLINUX screen and never recovered.

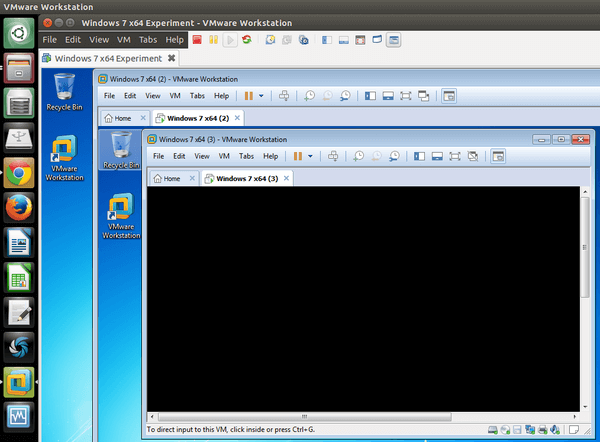

We tried to recreate the scenario with Windows 7 x64, in case it was Ubuntu's fault.

In fact, the results were worse. We could only install Windows on the first nested virtual machine, and the second would get stuck before even starting the installation.

The results

In the end, it wasn't the RAM or the CPU cores. It was probably the VT-x that couldn't handle the progressive re-virtualization.

Could an even lighter OS, such as a stripped down Arch Linux, give us a third nested VM? Possibly.

For the time being, though, a VM within a VM within a VM is impressive enough. And utterly useless, as opposed to having three separate VMs working at the same time.

Well, now you know. And knowing is half the battle.


Cool af

Cool article, thanks ! :D

I think all offerings are still using software virtualization beyond the first level of nesting, just that VMware is capable of 64-bit software virtualization. Also, I imagine disk I/O gets reaaaaaalllly horrible soon when nesting file-backed hard drives, so maybe some of us lookie-loos should try nesting with raw-disk backed virtual storage, as this /should/ get rid of a lot of redundancy.

The solution to this seems like it might be something similar to how we solve stack exhaustion for recursive functions in programming languages. Rather than actually nesting anything, some hypervisor needs to enable 'vm promotion', wherein all the parameters for the nested vm are just passed up the stack to the root hypervisor, and it understands how to recompute all these parameters so that it can directly run the VM itself, hiding the details of this promotion from users of the root hypervisor, without violating any permissions/resource quotas that they have imposed on their children.

The nature of the problem is known, and has solutions in other domains, it's just a matter of software vendors recognizing that "yes, tail call optimization can be applied in some form to hypervisors".

Given that we have already applied a similar solution to the problem for top-level guests, by 'passing all the parameters up' to the hardware itself a la VT-x, it's essentially just a matter of virtualizing VT-x. Not to say that a real usable production solution would be easy to implement, but that the path to take is relatively clear. It's just a matter of doing it.

Thank you for your input, Jesse.

Nice article! I was recently wondering if this was possible without lots and lots of bucks (hardware mostly, but software too). Guess i'll stick with 3 parallel VM's.

Also, it's the first time i read a PC Steps article and like the tone! It's funny and objective. A rare thing to witness.

The issue is not with the hardware, the issue is with the way you're doing it.

You are using Type 2 hypervisors for something they aren't really meant to do.

Go for CentOS KVM / VMware ESXi using that same hardware and you will have much better performance and functionality. However, the setup won't be as easy as above.

With ESXi, you can install it as the hypervisor OS, and then let it host another node of ESXi, which hosts VMs for nested virtualization. And guess what: in that "guest hypervisor" (the hypervisor installed on physical hardware is called the host hypervisor), you can virtualize a 64-bit Windows or Linux without any issues.

Yes, it makes sense that ESXi would work better. Thanks for the info, I might try it next time I update the guide.

Cool.

Sorry if i sounded arrogant, it is just optimizing VM is currently my thing.

Keep an eye out for CentOS with KVM with paravirtualization. It's not as hard as it seems; after using ESXi, it is an equal-difficulty playing field.

Feel free to reply back to me in the future if you need any help with writing a future article.

Sure, will do.

True yes, with ESXi i've managed to get to level 3 nested (physical esxi, nested esxi, nested esxi, nested esxi) on 2 CPU server. It also helps to begin with hypervisor on physical.

Why didn't you try QEMU with KVM? You were using Linux after all.

It is probably worth a try. I'll keep it in mind for the next time I update the post.

On the Virtualbox error that you see, setting your RAM amount of the guest somewhere around 3GB or less should make it go away and boot the VM. Too much RAM (3.5GB or so) and the hypervisor will need more address space for all the peripherals, and thus will try to make a 64bit CPU, which will fail with the error.

Cool, i didn't know that.

The "cryptic message" is "Ubuntu Desktop"...

There was a time when I worked with the original virtual machine facility: VM/370 on IBM mainframes. I never went more than three levels deep, but I knew someone who claimed to have stacked up six levels of virtual machines. I'm not sure what hardware that was, but it would have had processors running at no more than 40 MEGA Hertz and no more than 64 MEGA bytes of RAM. The only GIGA back then were 1-GB disk drives, which occupied cabinets the size of refrigerators. When you can do that -- and only then -- you have a proper virtual machine facility. Over time, IBM moved more and more of the VM functions into the microcode.

Except it's not utterly useless. I have a use case where I need to virtualize Windows 7 inside RHEL inside Windows 10.

Not useless at all. I need to run a Docker container within a VMware virtual machine with a Windows 8 guest. Normally Docker would natively execute on Windows 10, but for older versions of Windows you need a VirtualBox VM. So I need a VBox VM within a VMware VM.

Just to add another reason to have a virtual machine within a virtual machine...I had two situations that necessitated it. 1) Was Huawei's simulator and 2) was getting older GNS3 software to work. In the first instance, Huawei's release of a cloud interface only worked on Windows 7. Anything newer and the simulator barfed. So only that release would work, and I didn't want my host machine to have all these virtual machines. I wanted to contain the connection between the Windows 7 running Huawei, and the other Windows 7 running GNS3. It worked like a charm. In the second instance, my windows 10 network sharing component got NERFED which I fully explain on my website, but to be brief, I found it easier to spin up a Windows 7 ultimate, use the loopback adapter and use the older drivers to share the cloud interface in GNS3. Again, it worked the first time and I was able to replicate it into other virtual machines for training purposes.